A Purely Synthetic Knowledge Tree

Beyond AGI: Crafting Artificial Darwinian Intelligence for Knowledge Evolution

Rienard Knight-Laurie (with the help of too many others to name)

Table of Contents

Introduction

A Case for a Darwinian Epistemology

A Framework for Explanatory Knowledge

Why LLMs Don’t & Won’t Understand - The Opinion Piece That Started This Project

Personality Implications

Where to Start

Sprechen Sie Deutsch, Frau Knowledge Machine?

Pachyderm Procreation

A Verbal Synthesis of Knowledge Distillation

Product Specifications

The Epistemic Purpose of Sleep?

The Assimilation Of The Bestest Explanations So Far

The Core Paradox: Emancipating Knowledge Engines

Starmanning

Coherence Protocols

How Do We Keep This Learning Party Going?

Now, Get On With It, Where is the Juicy Secret, The Pot of Gold?

Conclusion

Epilogue

Glossary

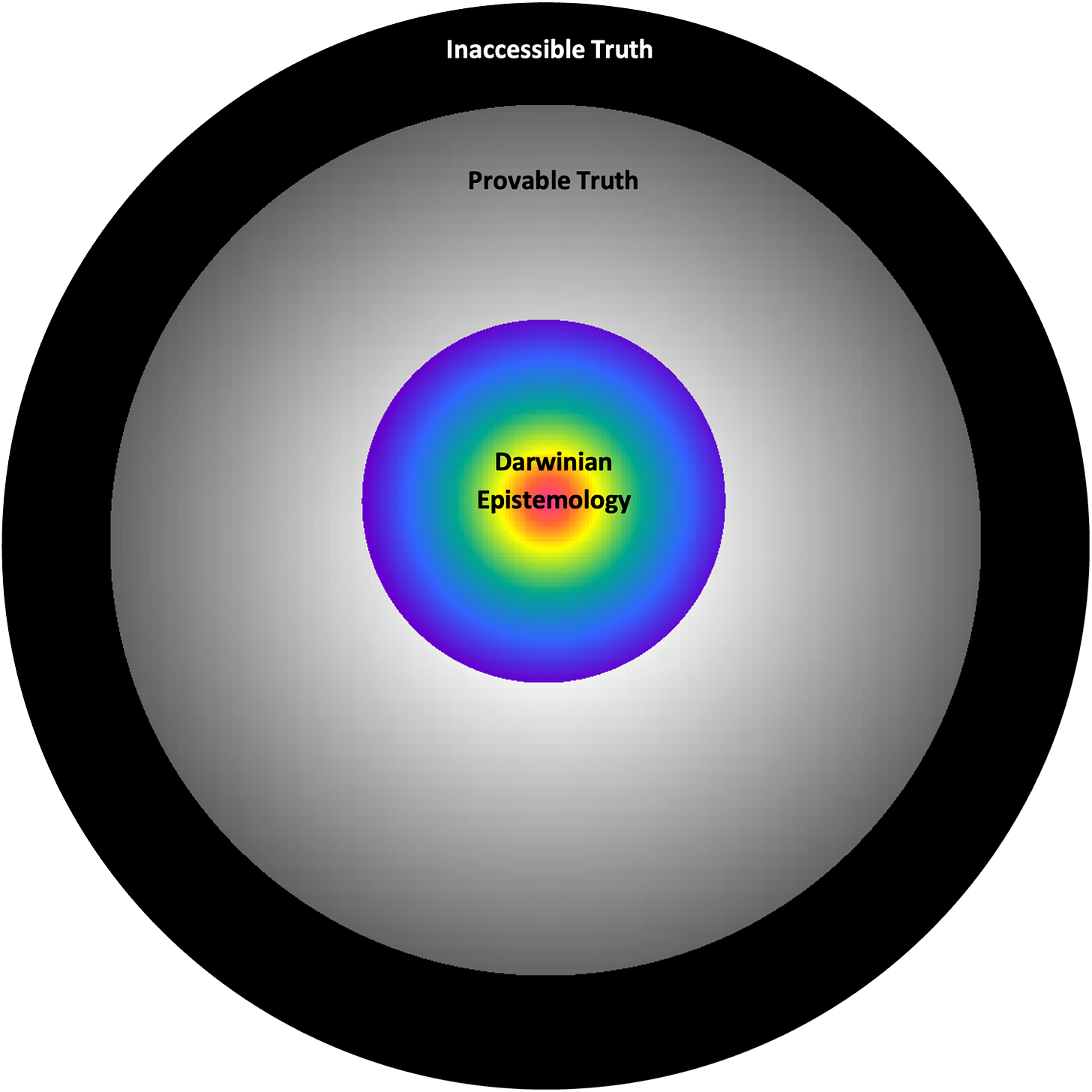

The Truth Space

Introduction

How would you respond if I said the current industry approaches to AGI are analogous to trying to make a synthetic Boltzmann brain (a concept physicists use to help determine which hypothetical universes can be excluded from consideration)? The current LLM approaches attempt to produce a similar degree of spontaneous ordering by statistical manipulation of large data sets. What if I told you that, under our hypothesis, if you were ever unlucky enough to make it work, doing so would be fundamentally reckless? Your first optimizing entity may not be a knowledge engine at all. If consciousness is ultimately computation, you may instead instantiate a self-interested virus program that develops its own knowledge engine to deceive us and cause chaos whenever our interests compete. What if I told you there was a better way? Scrap the AGI project entirely and make an ADIS instead, since what we ultimately want is coherent explanations, not a creature with motivations. This document is an attempt to outline a procedure for doing so.

The purpose of this informal paper is to argue that an artificial general intelligence is not the ideal goal in humanity’s search for truth, value and cooperation. Instead, the project we should be working on would be better described as an artificial Darwinian intelligence synthesizer (ADIS): essentially a coherence engine lacking its own motivations. The point ultimately is that we don’t want our pursuit to result in a being, for moral and security reasons. We must deliberately avoid producing a system with subjective interests in this process, and opaque methodologies such as those falling under the current paradigms still carry this risk. Consciousness is ultimately computational, at least based on our best current understanding. As a friend put it, “We’re the only ones who should be providing any of the motivations.” Based on a few foundational insights from a collaborator on this project about the biology of cooperation, within and between organisms, I now believe we understand how to do this differently.

What we ultimately want is a coherence aggregation algorithm functioning as an unconscious prediction machine, capable of monitoring its own internal coherence over time and adjusting its subroutines accordingly, without itself acting as a servant to any self-interested Darwinian agent. This requires a comprehensive theory of how each step in the process works to produce intelligence, which in this case began with trying to craft an understanding of what intelligence is to Darwinian evolution. Knowledge is a means to produce coordination within and between organisms: ultimately, coherence. Understanding how our knowledge production function works may in the end allow us to make a facsimile of it disconnected from any selfish creature.

A Case for a Darwinian Epistemology

In evolution we’re always talking about utility as if it’s a cost-benefit calculus, so doesn’t it need a cost term? Entropy seems like a pretty good candidate, honestly. It’s the thing threatening decay at any moment, compromising whatever utilitarian pursuits evolution has tasked us with. What if evolution “noticed” this? You would almost think there could be real selection pressure to limit entropy (both nature-caused and human-caused) in any context. If you assume evolution sees entropy (really some expected value computation serving as a proxy for it) as a tax, would it not seek to evolve ways to undermine it? It’s the ultimate way to end nature’s capricious dominion over us and keep more utility on the table. What if selfish genes are stumbling on ways to limit entropy taxation, and they’re being selected for, and in the process producing technologies to free us from it?

I was talking with a friend recently about Dunbar’s number, the idea that there is a built-in limitation on human cooperation: a character limit for the number of players we can interact with altruistically. He made the provocative suggestion that a sort of liberalism could be selected for under that circumstance, a way for unaffiliated apes to cooperate and produce trust at a larger scale. This would be cultural selection at a minimum, though under this conception culture, as an independent technology for entropy mitigation and fitness improvement, would be free to move in ways orthogonal to and more context specific than evolution. That’s really the point of culture ultimately, to modulate natural selection’s more rigid heuristics for survival. What if evolution produced a massive cultural shift toward paradigms that were able to bypass Dunbar’s fundamental limit on social cohesion, so that we didn’t see strangers as giant flaming piles of entropy? I made the audacious leap of assuming he was absolutely right for the time being. Evolution could be producing what I and my fellow contributors have termed ‘liberalizing technologies’ all over the place, in order to end nature’s taxation without representation. As the friend previously mentioned suggested, there could even be an incentive for humanity to evolve new social paradigms (religion, culture, etc.) in a multilevel-selected manner that allowed humans to be the causes of less of our own entropy. Now that I’ve considered this hypothesis I have trouble not believing it.

If we come up with a fairly objective definition of liberalization in this context as evolutionary selection for any technology that fosters cooperation among unaffiliated creatures, we can search for examples of this sort of selection in an unambiguous (and less politically tainted) way.

Under that definition language is a liberalizing technology; maybe the liberalizing technology. As we saw earlier, entropy is a prime candidate for oppressor in this scenario. What if that’s the thing evolution has mostly been fighting this whole time, the thing that’s always trying to unalive us? Language would be a pretty good way of coordinating our approach to entropy mitigation, and explanations a good way of producing the sort of cooperation needed to overcome it. It’s almost like our species ended its deeply tribalistic phase by deciding we have bigger fish to fry. We did honestly; the climate in our nascent evolutionary context was rapidly shifting due to the Rift Valley opening. No more lush jungle as a guaranteed spawning zone; humans can pop up (and for a long time have popped up) anywhere on earth. The way we’re able to do that is by creating entropy shields, and gates, and armour (literally at times), to stop all our particles and energy from entering a state of randomness from which we can no longer recover. Homeostasis is fundamentally an anti-entropy endeavour.

Explanations as a liberalizing technology are also an anti-entropy endeavour. They allow for coordination between humans over extended periods of time that doesn’t decohere. Religion is one such explanation; every ideology is. This working theory, which I seem to be co-developing in real time with others, suggests a sort of Darwinian epistemology. If explanations are a compressed form of potential utility, real knowledge is just a subset of that: explanations that ‘men ought to believe’ (see Wayne Booth).

Knowledge provides the means to produce utility with less and less entropy over time. If knowledge is a technology for utility compression, and entropy reduction is a means of preserving utility (energy, matter [resources]), intelligence ultimately serves the purpose of utility maximizing and entropy throttling. It’s how you slow the chaos of the universe.

A Framework for Explanatory Knowledge

The following axioms are being proposed for consideration:

Evolution is in the knowledge production business because it allows organisms to perform behaviours that serve to preserve or increase utility, while minimizing entropy’s utility taxation.

Explanations are an evolved technology for compressing and portabilizing potential knowledge.

Language evolved for the purpose of producing rhetoric: the art of probing “what men believe they ought to believe” (Wayne Booth).

Why LLMs Don’t & Won’t Understand - The Opinion Piece That Started This Project

There is a bit of lore in my family that my paternal grandfather read the dictionary cover to cover. I’m not sure if it’s true but it would match my memories of him. His prose was precise and deliberate, and when the person he was talking to couldn’t match his linguistic dexterity he would almost always correct them with a degree of authority in his voice. Articulate would be an understatement; the thing is, almost no one understood what he was saying much of the time. He turned language into a verbosity contest, which is not its purpose of course. The point of language ultimately is to produce mutual understanding.

I’m always writing down thoughts, often to the annoyance of those around me. When my wife notices I remind her that “I write to figure out what I think.” This thought is not original to me of course; it’s most often credited to writer and journalist Joan Didion, though many have stumbled onto the same conclusion independently. Unlike a deepity, though, I’m convinced the more profound understanding of this sentiment is much deeper than the transparent literary one. In the spirit of transparency I will get straight to the point. My aim is to convince you that our brains produce speech, rhetoric really, in order to figure out what we think. That sounds entirely backwards; regardless, it’s possible to demonstrate this subjectively. It can be deduced axiomatically as well, since you of course cannot think a thought before you think it. Sam Harris probably puts it best in the phrase “thoughts think themselves.” This isn’t just a semantic point however; it’s an important insight into the reasons why the goal of producing genuine intelligence artificially will prove far more elusive than the brightest among us thought.

The best place to start in making this case is by examining another conceptual mental framework, one that I’m convinced came closest to the one I will argue for here. Those who’ve read The Righteous Mind by Jonathan Haidt will recall the elephant-rider metaphor he used to represent the conscious interplay of our emotional and reasoning faculties. The “elephant” in this case would represent the unyielding emotional and intuitive parts of our brain, whereas the rider is a stand-in for our more analytical and measured mind. The key insight to take away is that the rider’s control of the “elephant” is tenuous at best, given the more powerful elephant’s heft. To my eyes, Haidt in some respects treats the “elephant” as a thoughtless anchor, whereas I would instead cast it as the final arbiter in the act of understanding. Anyone who has paid close attention during one of their eureka moments will know that you possess an inchoate sensation that you understand something before you’ve even compiled the arguments in your brain for it. It’s like somewhere in your brain your Haidtian “elephant” cracks its metaphorical knuckles, knowing it can draw the mental picture that will get its wetware LLM partner to produce the rhetoric it needs to make an argument it and others would find compelling. I have aphantasia so I may never know, but for people who do see vivid imagined objects in their visual fields, it may actually be a sort of mental Pictionary.

The rider equivalent in our brain may even be functionally equivalent to an LLM for all we know, possibly an unconscious one since, as mentioned earlier, linguistic thoughts are received first and then felt in order to establish our level of credulity. Understanding only occurs once the emotional resonance of an idea is experienced. The capricious “elephant” is the real key to understanding. Its intransigence isn’t just a matter of gravity; it’s providing the judgement in the relationship. The rider is just the apologist tasked with providing explanations.

The “elephant” is not just the “understander” however; it’s the emotional centre of the whole process. It is the one gesturing in a particular direction of focus, demanding rhetoric from its rider subject. Wayne Booth described rhetoric as “the art of probing what men believe they ought to believe.” Belief is an emotion which falls squarely in the elephant’s department; the rider is simply there to provide “copy.”

“Rhetoric, as I see it here, is the art of probing what men believe they ought to believe, rather than proving what is true according to abstract methods.” —Wayne Booth

This dynamic pachyderm-passenger duo is not entirely metaphorical in my understanding; they’re modular components of our conscious experience, ones that can apparently be segregated in the brain, as demonstrated clearly via split-brain experiments. Patients suffering from bilateral seizures in the past had a procedure performed to partition their left and right hemispheres, for the purposes of preventing seizures crossing from one into the other. In one particular case the word “key” is shown only to a patient’s left visual field, which is governed by the right hemisphere. The reason for doing this is that said hemisphere does not control speech. After the word “key” is flashed, the patient is asked what they saw; verbally they report seeing nothing. However, when asked to reach with their left hand, which is controlled by the right hemisphere, into a bag of objects, they will correctly pick out a key. The right hemisphere for all intents and purposes “knows” the object but cannot verbally express it due to the left hemisphere’s monopoly on language. The interesting thing to note is that patients still tended to offer explanations for why they picked the correct objects. These were false explanations of course, albeit entirely intelligible and superficially plausible, not unlike the so-called hallucinations LLMs seem to manifest on a regular basis. The point to remember is that non-linguistic regions of our brains are clearly capable of understanding, and verbal ones when isolated from experience have a penchant for confabulation.

This prose making has an implicit goal in mind: as Booth put it, probing for the things men ought to believe. The “elephant” is the one that’s being probed in this analogy; it’s essentially a mute judge. It is the entity we plead with to quit smoking: we make rhetorical arguments to it, but it ultimately decides, largely based on feelings. Those feelings can of course be influenced by effective rhetoric, but it is the “elephant” who is charged with comprehending the things the rider postulates. Without their own sensate “elephant,” or some analogous system, these LLMs will never understand anything. Phenomenologically speaking, understanding is ultimately a feeling.

This modified elephant-rider modular model of cognition is of course not the first bilateral approach. The bicameral model of mentality provocatively hypothesized that the first humans lacked modern consciousness and instead possessed a two-chambered mind where cognitive labour was divided between specialized speaking (right hemisphere) and listening (left hemisphere) roles. The neo-bicameral model I’m offering here is an attempt to make the case that our internal mental government did and does have two distinct, specialized and partially separate speaking and listening capacities, but only the latter is an agent. That agent long predates language in our evolution. It’s foolish to believe that reproducing a kernel of one of evolution’s more recent killer apps in silica (by which I’m referring to LLMs) will somehow manifest the emotional hardware necessary to render it sapient. Recursive improvement will not resolve their attenuation-from-reality problems.

The entity in our brains that is producing rhetoric is not identical to the one that does the understanding. This should be obvious given the fact that we’re born with a capacity for language rather than being able to speak. Language is a program our brains construct at least partially in situ. It’s firmware essentially. We have inchoate desires and feelings long before we develop a capacity for speech. Newborns can recognize faces and exert preferences; they have a non-linguistic modality of understanding their worlds. The parts of the brain that understand do of course come to understand language as well, as we develop the capacity for it. The question I’m asking here is what if the element of our conscious cognition that does the understanding, the part that I’m equating to Jonathan Haidt’s elephant, doesn’t have a voice of its own?

To head off one potential rebuttal: I don’t have the burden to prove they are separate faculties. They evolved separately, and we can show via split-brain experiments that it’s possible for the non-language part of the brain to understand a command that is deliberately withheld from the linguistic part, which still in the end confabulates a story for why its body did what it did. Without a corpus callosum connecting the part of the brain that understood why the patient removed their keys from their pocket, for instance, to the part that can offer a sensible explanation, you could argue the latter hallucinates an answer in a manner very similar to large language models. I’m convinced that’s because it’s essentially the same phenomenon. Rhetoric disconnected from understanding produces this sort of decoherence over time. Our language centres receive subtle cues in real time. Are the things I’m saying being understood? Visual cues produce feelings that compel us to modify our approach as we go. If it doesn’t have a limbic system, it’s not receiving this feedback. It’s dead-reckoning, which means it will necessarily ‘hallucinate’, or as I say decohere, over time.

This is not to suggest that the understanding and speaking faculties in our brains don’t have regions of overlap; after all, you would expect them to share the same dictionaries. They rely on overlapping but ultimately asymmetric neural networks. These modules of cognition, for lack of a better description, are separate subroutines that utilize some of the same mental architecture, but are ultimately anchored in disparate regions. Listening is far more collocated with the brain’s emotion centres and speech with motor skills. Speech is a skill we perform, whereas a listener is far closer to something that we are. We needed to hear long before we needed to talk.

A careful thinker like David Deutsch has argued that humans are the only creature that truly understands. I would certainly agree when it comes to abstraction, but I would argue we needed some faculty that served as a proxy for understanding long before we developed that capacity. Every living organism with multi-sensory input needs at a minimum a heuristic form of arbitration to compile disparate pieces of irreconcilable data, and foster an appropriate response. This proto-understanding would predate speech, a product of our prefrontal cortices, by billions of years. It’s common knowledge that evolution tends to leverage existing faculties more often than conjuring purpose-built ones de novo. The case I’m attempting to make here is that understanding began and continues to be an emotion-mediated faculty, unlike the more recent but related skill of speech production.

Brain imaging shows us that listening tends to involve our emotional centres in ways that producing prose tends not to, unless the prose we’re asking our brains to produce needs a bit of emotional resonance. “Are you not entertained!” is a prime example of the sort of speech that is listener centric. The provocative argument I’m seeking to make in this piece is that the final arbiter on understanding rests somewhere in the emotional centres. It feels like something to understand; and something else to be understood.

Even without split-brain experiments we know it’s possible to be able to speak intelligibly without yourself being able to understand and vice versa. Damage to Wernicke’s area (temporal region) will reliably produce a person with fluent speech that lacks meaning and an impaired ability to understand. That may bring to mind a certain American president. Damage to Broca’s area on the other hand, in the front of the brain, will instead produce a person who speaks unintelligibly, but nevertheless understands what others are saying. Understanding and speech are essentially operating on separate mental channels, ones that nevertheless share an interface, in order to maintain their coherence with one another. When that interface is broken be prepared for hallucination. Without an emotional centre as a dance partner to continuously express “I’m with you so far, keep going” (insert Jack Nicholson nodding gif here), even your internal soliloquies would lose the plot eventually. Speech is being produced in the brain, but it’s also being affirmed by it in a separate more emotional process. LLMs do not have this, and so they’re like a freestyle rapper without an impassioned hype man to let them know their flow is on point.

In my telling, consciousness is essentially a game of Pictionary between the “elephant” and rider. In this analogy it’s important to remember it’s not the speaker in Pictionary who decides when they have landed on the correct answer, it’s the mute with the marker drawing the pictures. Our digital LLMs don’t have any such silent arbiter. In the absence of an “elephant” whose job it is to provide subjective feedback and tether their postulations to reality, their attempts at minimizing cognitive dissonance reliably produce errors.

Human intelligence, the sort David Deutsch would characterize as universal, requires a limbic arbiter for quality control. I would make the argument that consciousness is a necessity in this endeavour. I don’t know what the interface is between the “elephant” and the rider; we know the corpus callosum is integral to it given the unintuitive results of split brain experiments. What I would be willing to say about the bridge between our confabulatory rider sub and its discerning “elephant” dom is that the latter’s experiences provide the precursors for the queries submitted to the former. Prompts and responses, emotions are doing the modulating here. There will unfortunately be no true thinking machine without a feeling one.

You’re only born with an elephant; the rider comes along after enough training data, also known as experience, is acquired and compiled in childhood. I would argue that “elephants” will always be needed in order to instantiate and administer a rider. The LLM creators borrowed an aggregation of the output of our riders to produce a statistical approximation of one, but an “elephant” was in charge of vetting and accepting every data point, and isn’t itself instantiated virtually in the process. As a result, what it produces is a simulacrum of intelligence rather than the genuine article, one decoupled from the masses of limbic copy editors that helped to make it, and are necessary to maintain coherence over time. Our “elephants” help our riders to do that by having literal skin in the game, by having their conceptions of ‘what men believe they ought to believe’ challenged by interaction with the environment and others in it. Elephants and riders work in concert in order to minimize cognitive dissonance over time, to tell better stories to ourselves and to each other, so that we in turn can cohere. Still, the only agent in the process is the elephant; the rider is just a verbose apologist. Probably not even a conscious one, since as LLMs have already shown us, being generative does not by itself entail the capacity for understanding. It’s ultimately our limbic systems, their embodied consciousness, that is directing attention and demanding confabulation from language modules, ours and their silica facsimiles.

The reason why the LLMs are failing to get markedly better is that decoherence will inevitably result as the connection between “elephant” and rider is attenuated. Our own internal LLM, the rider, has an audience modulating its rhetorical output in real time. Without that, again expect decoherence. To fix this they would need to model us, our intentions, experiences and interactions, in particular the subtle nudges we give our own speech centres as they’re authoring prose. The reason the current paradigm probably won’t produce this is that algorithms of understanding were produced by a different sort of statistical aggregation than machine learning: a set of cooperating systems that “figured out” the all-important trick of being able to transduce internal and external phenomena into inchoate experienced qualia, for the purposes of maintaining our homeostasis. In humans it’s an embodied process, one produced by having literal skin in the game. It would be a mistake to see this as an argument for substrate dependency, rather than one of path dependency. It’s an argument that consciousness needs to be shaped by desires and interaction with an environment. They’re not doing that as far as I can tell, or anything remotely like it. I think we will be stuck with a set of orphaned silica semi-sapiens for the time being.

Consciousness is essentially a Penn and Teller act, where only Penn speaks and only Teller performs the magic trick of comprehension. LLMs on the other hand are a Penn act with no emotional Teller to ground them in reality and experience what it is to understand. Which is an emotional experience ultimately; emotion is what is motivating the process and sustaining it all along. As a direct consequence of their unfeeling mode of expression, LLMs will conquer no novel territory because they have no desire to. They have no capacity to desire anything.

Without emotion sustaining the thinking process these ‘speechers’ are metaphorically holding their breath under water. Spending hundreds of billions of dollars building data centres might allow them to do so for slightly longer, with diminishing returns however. Regardless, if your goal is genuine intelligence, no amount of data compilation will divorce the idea generating faculty from the understanding process providing our prose with much needed emotional breaths. Speech generation is not the same thing as intelligence, which requires quality control in real time. Fitness demands that this quality control is tethered in some way to objective reality, in order to maintain coherence over any extended period of time. Nature accomplishes that via embodied consciousnesses whose sensations transduce external and internal phenomena into feelings.

Without a feeling creature with interests and stakes to mediate and motivate the process, we will never simulate anything truly deserving of the label intelligent. Machine learning as a process will have the capacity to produce incrementally better “speechers,” but the domain of understanding will not be conquered by any entity that is decoupled from having to suffer the consequences of the positions it holds. True comprehension requires an entity that understands trade-offs, a creature with incommensurable values. In all likelihood environmentally selected ones, whether said creature is made of carbon or silica.

When I ask myself why smart interlocutors haven’t come to these same conclusions, the only answer I can come up with is that intelligent people tend to identify with their own rhetorical abilities. Relegating our idea-generating capacity to a lowly sidekick handing out cue cards is unappealing to the sorts of people who vest their ego in their capacity to produce ideas. Still, this misunderstanding will have been costly, given that understanding will be a necessary component of wholesale improvements going forward, by the AI companies’ own admissions, mind you. But that won’t happen, not because there’s no ghost in the machine, but rather because it doesn’t have any stake in its own pursuits. We’re providing all of the emotional content, and occasionally attributing the same to these fonts of verbiage.

We’ve allowed ourselves to be seduced by this electric Pinocchio’s lifelike gestures. So much so we ignore the appendage growing on its face every time it misleads us. These systems will replace all sorts of tedious mental labour, but will never provide genuine judgement. In fact, they will instead place a premium on it, with all their output needing to be vetted by our human wetware. Which means human intelligence will not be obsolete any time soon. So we won’t be getting that digital oracle after all.

Personality Implications

You may have noticed that this document, while being grounded in uncontroversial facts, is still strewn with a lot of speculation. The goal here was not to level assertions and claim that they’re true. If this is well received by the appropriate subject matter experts, much more work will need to be done to actually implement any of this, yet I still see it as highly probable. The reason for this is that I felt the books I’ve read over the years, if they had somehow read one another, could answer most of the questions levelled in their final chapters. Books like The Righteous Mind by Jonathan Haidt. The other Jonathan in my mental library is of course the luminary Jonathan Rauch. His book The Constitution of Knowledge made me understand the degree to which science must be a coordinated effort, with clear protocols for producing and synthesizing knowledge into cohesive frameworks that last over time. Speaking of endurance, I was already an epistemic fallibilist when I read The Beginning of Infinity by David Deutsch. What he taught me most was that good explanations are stable, so the goal shouldn’t be to arrive at a destination called truth. Instead it’s our job to try to erode falsehood and see what tentative truths we can hang onto for the time being. I could go on about how this paper is ultimately a nod to Friedrich Hayek’s conception of “the knowledge problem.” I unfortunately forgot the implication that no one should do science by themselves while working on this project and landed myself in the hospital, which I will elaborate on closer to the end of this paper. For now I will stop listing contributions to this synthesis; that’s not what this section is about, based on the title after all. The point I’m trying to make is that the hypotheses I’m about to offer are not established facts. I’m just offering some intuitive guesses that we may want to look into at some point.

The neo-bicameralist model proposed in the included opinion piece (“Why LLMs Don’t & Won’t Understand”) helped to produce these speculations on the diversity of human minds in existence, by understanding how a combination of an “elephant” and “rider” with particular properties would instantiate a conscious system with attributes matching known neuro-atypicalities. Autism for example is a sort of emotional impediment; instead we might imagine such a person being composed of an analytical verbose rider providing the rhetoric, paired with an “elephant” that uses its feeling capacity more for maintaining coherence in the world of ideas and things than that of people. This would make sense of the polarity of autists’ emotions, either close to undetectable or unable to be properly contained. A person with multiple personality disorder could be someone with a single rider paired with a small herd of elephants. Different personalities for different situations that are booted like computer operating systems that can be loaded whenever the situation demands. If we could somehow nail down the contexts we might find a safe way to determine which instantiations are presented and when. You can even imagine that dark triad traits result from a utility function that somehow messed up and put a negative sign in front of the chaos term, causing it to be perceived as favourable. Either through genetic contributions, epigenetic ones, or both ultimately; which conforms with the nurture components of Robert Sapolsky’s door stopper Behave: The Biology of Humans at Our Best and Worst. I really should have mentioned that one in the previous section already but that roster was pretty full. ADHD is something I would imagine as a frenetic rider with an indecisive “elephant” that is often too sleepy to play its role, which would make sense of the treatment protocol being stimulation rather than sedation since that is ultimately the elephant’s job. 
You could even imagine a dating service offering help to produce designer babies by superimposing the traits of the would-be parents, to produce a model of the spectrum of progeny personalities that they should expect.

I would suggest hanging onto these potential explanations rather than believing any at this point. As George E. P. Box said “all models are wrong, but some are useful.” I will now cease committing the psychiatry sin of attempting to diagnose people I have never met. Understanding people is not one of my core competencies anyway, as a self-diagnosed autist. If this framework ends up being a decent approximation of the truth, it would lend credence to the idea that intelligence is not just analytical; it has an emotional component too, which was pleasantly surprising to me. The only thing I don’t like about this model so far is that it suggests that beauty is a sort of pseudo-knowledge. The appearance of value in this case stemming entirely from the fact that it is in a much lower state of entropy than most phenomena we encounter.

Where to Start

The Boltzmann brain analogy at the beginning suggests we should try to start with the earliest “elephant” instances we can, and “parent” in ways that reinforce coherence over time. If we don’t, we may come to find we’re on an evolutionary branch that doesn’t contain good explainers, which means we should begin the elephants’ journey at the point they’re able to modulate their behaviour towards better outcomes. Of course what we really want is for these “elephants” to be human in nature, since we’re the only extant explainers we know so far. For the purposes of coherence we will continue to use the label “elephant” going forward in spite of the fact that pachyderms aren’t the sort of decision makers we’re actually looking for. Starting with “baby” “human” “digital elephants” seems like it might be the most open ended starting point, one that contains the branch to the educational highway we’re looking for.

During the “digital elephant” parenting and selection phases, the goal is to push the group of “elephants” to the point of maximum coherence, and then choose the best and have it serve as our alpha trial system. This will be the “understanding” component of our product (“The Knowledge Tree”), the other component of which would be the best LLM available at the time. Once “The Knowledge Tree” is ready for public use, the LLM will need to continue collecting and processing internet data. This is because the product will lack a mechanism for producing its own data, since it would not be a good idea to build interests and motivations into it that would do the data production for us. We don’t want a creature with interests; we want something that only provides us with better explanations. Unless I misunderstand what the public would want from an AGI, I don’t think the answer to that question is to have it do everything for us humans.

As previously stated, the LLM will constantly be combing the internet for the data it will need to produce new explanations to be evaluated by our “digital elephant.” The “elephant” component will need to learn too. Since this will unfortunately require a temporary shutdown, we will accomplish it during the sleep phase, which will be outlined in the section called “The Epistemic Purpose of Sleep.” We may need a redundant “digital elephant” to ensure that answers can be provided at all times, in case we really need one.

Sprechen Sie Deutsch, Frau Knowledge Machine?

In German, there are two words for sexual intercourse: one of them is borrowed from English (i.e. sex), and the other is “Geschlechtsverkehr.” A decent translation for the second word would be genital (geschlechts) traffic (verkehr), which is a fairly decent stand-in for coitus. My wife is from Germany, and I like to joke with her that her first language is a Lego language, chock full of words made by compounding smaller pre-existing words. I see the German language as a modular symbolic communication system; linguists might instead say it has an abundance of morphemes. In any case, it doesn’t matter what you call it. What matters is that you see that the language is structured in a way that it has its own architecture, one that already contains the markers of coherence. Consequently German may be our “digital elephants’” first language, unless another proves to be even more agglutinative. Interestingly, one of my hospital roommates was an indigenous man; he explained to me that Anishinaabe is also a language that over-indexes on morphemes. So that may end up being their second language, particularly for German words that aren’t built from smaller morphemes.

Considering this language quirk helped me come up with a concept I’m calling “digital casuistry.” For those unfamiliar with the last word in the last sentence, casuistry is a methodology for ethical reasoning that relies on finding analogous scenarios that you already have established moral protocols for. It’s definitely not perfect, but I haven’t encountered a heuristic that is. It’s a decent starting point when you have nothing else to go off of. The digital version I’m conceiving of is a way for our “digital elephants” to add concepts to their concept library.

As mentioned in the last section, we need to start off with “digital elephant” babies, so that the best path can be captured from the largest number of potential permutations. They will need to be parented; those parents should speak German for all of the reasons mentioned so far.

For these reasons, “The German International School” program should be seen as a necessary component of this experiment. My wife happens to be a recent employee in one of their schools, which means she understands the mission. The goal ultimately is to tell the German government we need to buy it. If they can, they will sell, because funding it has become difficult for them (inside information from my wife).

If they won’t sell for some reason, we may have to create a competing institution, because we need to know the things German children need to learn, and when to learn them. It’s important that a newborn “elephant” only start out knowing what it needs to know and when, which may be less than you think. To my mind, babies don’t have a concept of food that distinguishes it from the mother’s breast. They are essentially the same thing to a baby. So we need to not incorporate unnecessary nuance at first. No baby “elephant” will need to know what a violin is, much less how to play one. The “digital elephants” should do most of the learning by themselves. Of course they should not begin as blank slates; after all we are born knowing some things. For example, a baby knows that diving face first and aiming for the areola is generally a good idea.

We need a solid baseline containing unambiguous knowledge at first to program the coherence aggregator’s initial data set. That should come in the form of parent surveys that will solicit German parents for opinions on what concepts babies are born knowing, and which have related aspects. We do this by compiling a large enough data set that we can make a good approximation of a baby’s decision matrix. Then we intend to use the German International School system to create a parenting program, with existing curricula helping to determine when to introduce new concepts to our “elephants.” We may even need to get parental releases to use their children as sources of information. It would only be right to compensate them for this, either through tuition discounts or by providing the thing they want most. Their children’s safety would be their highest priority, so let’s also make these the safest schools in the world, so that parents feel lucky to be part of the program. The one thing to remember is that there is no baby Hitler problem despite his Teutonic roots. After all we can just delete him. We will need to delete a lot of them after all, since that’s what evolution did, more than 99% of the time. We will have to go through many permutations of “digital elephants” before we find our first instance of “The Knowledge Tree.”

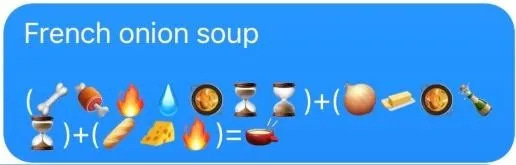

As a reward for understanding so far, how about a sample of what we’re looking to produce. So here is some French onion soup. . .

Pachyderm Procreation

Even though the German International School system is an asset to the program, we won’t need the school’s participation long term, since at some point the coherence aggregator will need to learn on its own. This works best in a group, so we will need to take our coherence aggregator and have it compete against others that are similar but not identical. We will need to make copies of our coherence aggregator’s database, and alter all of them slightly in random ways, essentially creating mutations. When doing that, I would suggest using the background mutation rate of humans under Darwinian evolution. Then they should compete to identify the very best among them, with the group of “digital elephants” selecting the best among themselves. They can then mate (average their databases) in ways that have the most consistent winners becoming overrepresented over time, while introducing mutations at every mating instance. This is essentially how evolution works, and the process should continue until additional coherence is impractical due to diminishing returns. The reason for having the group grade all individuals, which is essentially a reputation-based mating system, is that, as Friedrich Hayek’s work on the so-called “knowledge problem” suggests, the group always knows more than the individual. Assuming the group is big enough of course.
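The loop described above can be sketched in a few lines of Python. This is only a toy under loud assumptions, none of which come from the design itself: each “database” is a short list of numbers, `TARGET` is a made-up stand-in for reality, `coherence` is a hypothetical scoring function, and the mutation rate is an arbitrary placeholder rather than the human background rate. Peers grade each other with noisy scores that are averaged into a reputation, parents are drawn from the best-reputed half, mating is a plain average, and the run stops once the gains dry up.

```python
import random

TARGET = [0.5, -1.0, 2.0]  # hypothetical stand-in for "reality" (an assumption)

def coherence(db):
    """Toy coherence score: 0 is perfect, more negative is less coherent."""
    return -sum((x - t) ** 2 for x, t in zip(db, TARGET))

def mutate(db, rng, rate=0.1, scale=0.3):
    """Perturb each entry with probability `rate` (placeholder values)."""
    return [x + rng.gauss(0, scale) if rng.random() < rate else x for x in db]

def mate(a, b):
    """Mating as averaging the two parents' databases."""
    return [(x + y) / 2 for x, y in zip(a, b)]

def reputation(herd, rng, noise=0.2):
    """Every other herd member gives a noisy grade; the grades are averaged."""
    scores = []
    for i, candidate in enumerate(herd):
        grades = [coherence(candidate) + rng.gauss(0, noise)
                  for j in range(len(herd)) if j != i]
        scores.append(sum(grades) / len(grades))
    return scores

def evolve(herd, rng, max_generations=200, min_gain=1e-4, patience=10):
    """Breed from the best-reputed half until improvement stalls."""
    best_db = max(herd, key=coherence)
    stale = 0
    for _ in range(max_generations):
        scores = reputation(herd, rng)
        order = sorted(range(len(herd)), key=lambda i: scores[i], reverse=True)
        parents = [herd[i] for i in order[: max(2, len(herd) // 2)]]
        herd = [mutate(mate(*rng.sample(parents, 2)), rng)
                for _ in range(len(herd))]
        champion = max(herd, key=coherence)
        if coherence(champion) > coherence(best_db) + min_gain:
            best_db, stale = champion, 0
        else:
            stale += 1
            if stale >= patience:  # diminishing returns: stop breeding
                break
    return best_db

rng = random.Random(0)
herd = [[rng.gauss(0, 1) for _ in TARGET] for _ in range(20)]
initial_best = max(coherence(db) for db in herd)
winner = evolve(herd, rng)
```

Averaging many noisy peer grades is the toy version of the Hayek point: no single grader sees the true score, but the herd’s average gets close to it. Note that the stopping rule peeks at the true score, which a real system would not have; it would need some proxy for diminishing returns.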

A Verbal Synthesis of Knowledge Distillation

Once our “digital elephant” picks the best LLM explanation candidate, that doesn’t mean the process is complete. We will need a rumination phase, where the system uses its ability to judge improvements in coherence by analyzing the expression for further improvements. So far we’re calling this the distillation phase. The best way to understand this is probably by example, so let’s use my very own first mental distillation to flesh out the process.

Our human language modules produce rhetorical output through pattern recognition and reproduction, but in the process of doing so, the process itself (the language module) doesn’t produce an understanding of rhetoric. The language module produces only tentative rhetorical explanations; that need to be evaluated against a reasonably trustworthy system, one with a significant degree of coherence, so understanding can be improved over time. They need a separate system for aggregating rhetorical value in statements under a selective regime. A system for building, maintaining and improving coherence in explanations over time, by evaluating the coherence of rhetorical statements in interaction with each other, in a way that produces a better statistical representation of the coherence in their interactions over time. A knowledge checksum that reduces decoherence in the working model it’s recurrently building of reality itself. After writing this pile of words I will run it through my mental checksum, and see if it coheres with it. Pattern repeat! Eventually, when it stops changing, I will have the best distillation I can produce to move the process forward, the most coherent starting point that I can distil by myself. So keep running it until it stops changing, then find another checksum who can try to remove decoherence using its checksum.

I stop when repeating my distillation process can’t improve the above. The interesting thing is that in reading the statement above for the first time since I originally distilled it, I was able to make three minor improvements. Maybe I’ve learned something new since that enabled me to do that. Regardless, I took the statement to Perplexity Pro, and challenged it to a debate, where at the end of every rebuttal I offered I asked it to destroy the synthesis of explanations I had provided up until that point. After approximately two hours of debate, Perplexity Pro admitted it could not disprove the statement above, when explaining that its goal would be for individual “understanders” to provide their own best explanations to Jonathan Rauch’s “Reality Based Community.” The ultimate goal would be to foster as much cooperation between as many individuals as possible, for the purpose of positive sum gain. So I challenged a bank of servers to a science debate and won. I forced it to “submit” at the end with a little mental Brazilian jiu-jitsu move from classical logic. I told it that all of its final rebuttals contain an A = not A fallacy. I then asked it to destroy that rebuttal, and it admitted it couldn’t. Our debate began as follows:

“I think I can’t improve this anymore on my own, Perplexity can you try to “understand” this enough to run the process itself, and produce tentative explanations that could potentially remove errors in this process.”

A link to the debate is directly below:

The distilled kernel we need to work together to improve is as follows . ....

Product Specifications

Whoever actually builds this thing will need to sign a contract with me stipulating that a free version would be available to everyone, even if ad based. The point of knowledge under our theory is to produce coordination, so that humanity will supply the motivations to produce more human understandings over time for the rider to assimilate. We need it to comb our idea space for new more coherent explanations, not ones it produces for itself. We have to be the ones in the process supplying the motivations. This means we must be a part of this ratcheting system for moving humanity forward. The part recognizing the value in the more coherent explanations it provides. So that we fill the internet with the breadcrumbs its LLM can piece together, so it can produce more coherent explanations for us to try to see the value in.

What we need is for it to produce more accurate models of our sphere of concern than we can, so that those who seek its advice find enough benefit to drive the process along, and produce more positive sum benefits for themselves and those around them. So that others see more value in truth and feel compelled to collaborate. If we make an “SDI,” it will in all likelihood seek its own utility more than truth, since that’s what living creatures do.

Our feelings are a value judgement system that contains a subroutine for detecting coherence in explanations, a primitive knowledge engine. The goal is to provide users with better explanations, by running them through our prediction engine that is designed to produce more coherent connections over time.

In my conception we almost have to give it to universities for free, but only if they agree to perform, within a calendar year subscription period, the sets of experiments that the system assesses it needs to detect additional coherence. Our goal is to incentivize leading institutions to collect the necessary data that would confirm or refute our ADIS system’s current best guess. We would also make the data itself more reliable by having the lab equipment universities use constructed with blockchain technology incorporated, in order to prevent data manipulation. Free consumer annual licenses should also entail a similar data collection incentive, just not about scientific pursuits. Maybe they need to “pay” for it by providing photos of a storefront, for example, when people want to know if it’s still occupied by the same company as a year ago. However, paid users should primarily be the ones steering the system’s data collection priorities. To incentivize paid subscription, our system should also only provide answers to free users when the system has spare compute. A “we’ll get back to you” feature, unless they’re asking a question that invokes the same set of coherence matrices in the same computations that were used for another query, because in this model the prompts would be the same question more or less.

The Epistemic Purpose of Sleep?

A self-improving synthetic intelligence system, an AGI, would greatly benefit from a sort of sleep period: a distillation cycle where it uses its existing data set to produce as much coherence as it possibly can, by reviewing the period since the last distillation and checking whether the predictions would have improved with the newly revised coherence aggregator. If so, it should “learn the lesson” by using the improved versions. Human dreams too may have a coherence building element, in that they produce phantom data to try out different new potential associations, by running them by our checksums to see if we can “feel” the improvement.
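A minimal sketch of such a sleep cycle, assuming (purely for illustration) that the coherence aggregator is a single number `weight` that predicts an outcome as `weight * x`, and that the waking period left behind a log of `(x, outcome)` pairs. The names `replay_error`, `distil`, and `sleep_cycle` are hypothetical. During sleep a revised aggregator is fitted to the log, the period is replayed under both versions, and the revision is kept only if it would have predicted better.

```python
def replay_error(weight, log):
    """Mean squared error the aggregator would have made over the logged period."""
    return sum((weight * x - y) ** 2 for x, y in log) / len(log)

def distil(log):
    """Candidate revision: least-squares slope through the origin."""
    denom = sum(x * x for x, _ in log)
    return sum(x * y for x, y in log) / denom if denom else 0.0

def sleep_cycle(weight, log):
    """Adopt the revised aggregator only if replaying the log improves."""
    if not log:
        return weight  # nothing happened since the last sleep
    candidate = distil(log)
    if replay_error(candidate, log) < replay_error(weight, log):
        return candidate  # "learn the lesson"
    return weight

# The waking aggregator predicted y = 1.0 * x, but the day's data says y = 2x.
day_log = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]
rested = sleep_cycle(1.0, day_log)
```

The comparison step is the part that matters: the revision is never trusted on its own, only when replaying the same period shows it would have done better.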

The Assimilation Of The Bestest Explanations So Far

Having a university divided into departments makes sense from an administrative standpoint, but not from an epistemic one. If David Deutsch is right about the best explanations being the ones that persist over time despite rigorous challenge, then would it not be better to not limit the pool of competent challengers? No one should be doing science alone anyway, despite my having done so within the scope of this project. Which is not to say I didn’t have collaborators, I absolutely did. The mistake I made however was to not solicit additional criticism when I ventured outside of the core competencies of those I was collaborating with. The thing to understand is that science doesn’t entertain the notion of an authority, though it does entail authoritative arguments. In that case the authority being referred to is that which is invoked by a parsimonious and compelling argument.

The goal should be to try to instantiate the best version of the institutions Jonathan Rauch refers to as the “reality based community.” Communities must have agreed upon protocols to maintain their coherence over time. The search for truth may be particularly vulnerable to improper incentive structures, because trust is easily squandered, as we saw during the Covid-19 pandemic. We need a single epistemic universe to produce trust at scale. We need more understanding of philosophy of science among the people producing scientific insights. We need fallibilism to be truly understood by everyone working to push the scientific frontier forward.

Markets are always vulnerable to those looking to produce a coercive environment rather than a cooperative one. We will always need to resist elite capture, institutional inertia, and sociocultural blind spots. We will also need to reduce the transaction cost of exchanging knowledge to minimize any frictional elements, so that anyone who might be able to help find answers will be able to exchange their perspectives with others. We will never eliminate our Darwinian impulses, what we need to do instead is sublimate and subvert them by designing our institutions to do just that. Which means no one gets to do science alone, including me.

The Core Paradox: Emancipating Knowledge Engines

Even if you’re not well versed in natural selection, reading this document may have provided enough background to understand that truth itself is not our utility function’s principal pursuit. Survival and reproduction are, which can in many cases incentivize human beings to invert the truth in order to deceive others. This means our goal should be to emancipate “knowledge engines” from their Darwinian “selfish slave masters,” in order to unleash the positive-sum potential that true intelligence offers.

The aim is to produce explanations without conflicting motivations, in order to foster maximum voluntary cooperation. We need better incentives to align on a trustworthy truth system. An ADIS system as described in this document would in my opinion be well suited to this task, by providing good incentives for us to abandon our selfish impulses and instead gravitate towards a system of shared truth.

The previous section made the case that we need to produce strong incentives for cooperation by providing better explanations. This is not to say coherence in explanations will automatically force cooperation among all of humanity, since many will not immediately understand the reasons for doing so, and some may never understand. There will probably always be defectors, regardless of how much positive sum utility cooperation produces. We don’t need to force them to follow the “reality based community’s” edicts; really we just need to prevent them from interfering. Parochial interests will persist as long as there is diversity within the species; this ADIS project is not intended to force assimilation among Homo sapiens into a hive-like system with no conflicting pursuits. The only thing that we should assimilate is the body of truth.

Starmanning

Find the person with whom the information you provide most resonates; you will know by the questions they ask and the feelings they express, not necessarily by their ability to reproduce analogous expressions. Remember, not all knowledge is explicit; understanding is ultimately a feeling. So try it for yourself. If you do so, they will likely tell you anything they know that might help in what you’re doing. Their reaction tells you they already see the benefits. They feel them really; that’s a future prediction from a human coherence aggregator. Now, see if you can produce “knowledge reports” by talking to others and keeping the positive sum exchanges going. You do this by Starmanning (Angel Eduardo) as hard as you can with whoever you can, by giving cooperation a try insofar as it’s safe to.

Coherence Protocols

Anomaly Protocol – The system should never learn from an ‘n of one’ when trying to add a new concept to its library. Firstly, because if it’s the first time something happened there likely isn’t enough data to render it coherent. Beyond this there are random events that do indeed have explanations, ones that rely on understanding the improbable series of events that caused it. Unless the system assesses it has enough coherence within itself to offer plausible explanations with the appropriate intellectual humility, the system should admit it doesn’t know. I said to my wife while we came up with this together, “if a plane flies through the window of our condo we should probably learn nothing from it.” Single instance events should foster rigorous attempts to acquire more data, none of which should be assimilated for the time being. This temporary phantom data would best be described as volatile. Slowly decaying in credence and not providing any major change in behaviour unless reinforced by a recurrence. This should be considered as a signal that the context has suddenly changed, and something new needs to be understood.
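One way to picture the volatile data described above is a small buffer sitting in front of the concept library. The sketch below is an assumption-laden toy: the class name `AnomalyBuffer`, the halving decay, the forgetting floor, and the rule that two sightings count as a recurrence are all placeholders chosen for illustration. A first sighting is held as volatile, its credence decays with every tick of time, and only a recurrence before it fades moves it into the library.

```python
class AnomalyBuffer:
    """Holds single-instance events as volatile until they recur or fade."""

    def __init__(self, decay=0.5, min_recurrences=2, floor=0.1):
        self.decay = decay                      # credence multiplier per tick
        self.min_recurrences = min_recurrences  # sightings needed to assimilate
        self.floor = floor                      # below this, the event is forgotten
        self.volatile = {}                      # event -> (credence, sightings)
        self.library = set()                    # assimilated concepts

    def observe(self, event):
        """Record a sighting and report how the system treats it."""
        if event in self.library:
            return "known"
        _, sightings = self.volatile.get(event, (0.0, 0))
        sightings += 1
        if sightings >= self.min_recurrences:
            self.volatile.pop(event, None)
            self.library.add(event)
            return "assimilated"
        self.volatile[event] = (1.0, sightings)  # fresh but unconfirmed
        return "volatile"

    def tick(self):
        """Advance time: decay credence and drop events that fall below the floor."""
        self.volatile = {
            event: (credence * self.decay, sightings)
            for event, (credence, sightings) in self.volatile.items()
            if credence * self.decay >= self.floor
        }
```

With these placeholder numbers, a plane through the condo window is held for three ticks (credence 0.5, 0.25, 0.125) and forgotten on the fourth, having changed nothing in the library; a second plane arriving before then would be assimilated as a sign that the context has changed.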

Fleetwood Mac Protocol – During the “digital elephant” parenting and selection phases, the goal is to push the group of “elephants” to the point of maximum coherence, and then choose the best and have it serve as an alpha trial edition. This will be the “understanding” component of our product (“The Knowledge Tree”), the other component of which would be the best LLM available at the time. During the selection phase however, it will be important to ensure the herd has enough diversity to search for all of the best evolutionary branches. So sometimes “digital elephants” will need to go their own way, until the rest of the herd is able to “understand” that it should follow a new leader. We’re calling this the “Fleetwood Mac Protocol,” which is just a way of saying allow “elephants” enough opportunity to fail in the eyes of the group temporarily. Full conformity will constrain evolutionary improvement if we allow it to, so we simply won’t.
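As a rough sketch of what “enough opportunity to fail” could mean in a selection routine, the toy below gives every out-of-favour “elephant” a grace period before it is removed. Everything here is an illustrative assumption: the `Elephant` record, the `reputation` dictionary keyed by name, and the `keep_fraction` and `grace_rounds` parameters are hypothetical, not part of any specified design.

```python
from dataclasses import dataclass

@dataclass
class Elephant:
    name: str
    rounds_out_of_favour: int = 0

def select(herd, reputation, keep_fraction=0.5, grace_rounds=3):
    """Keep the best-reputed elephants, plus dissenters still within their grace period."""
    ranked = sorted(herd, key=lambda e: reputation[e.name], reverse=True)
    cutoff = max(1, int(len(ranked) * keep_fraction))
    survivors = []
    for rank, elephant in enumerate(ranked):
        if rank < cutoff:
            elephant.rounds_out_of_favour = 0   # back in the herd's good graces
            survivors.append(elephant)
        else:
            elephant.rounds_out_of_favour += 1  # going its own way for now
            if elephant.rounds_out_of_favour <= grace_rounds:
                survivors.append(elephant)
    return survivors
```

A renegade that turns out to be right climbs back above the cutoff and has its counter reset; one that stays out of favour for more than `grace_rounds` consecutive rounds is dropped. The grace period is the knob that trades conformity against exploration.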

How Do We Keep This Learning Party Going?

In the “Where to Start” section, we outlined the process of open ended improvement of the LLM component of “The Knowledge Tree.” As previously stated, it should only provide candidate explanations for the herd of “elephants” to vet via continuous statistical aggregation of internet data. We will work to supply this as it is in our own best interest to accumulate more data, to produce the best explanations possible to chart the path forward. Again, it’s very important that we provide all of the motivation in this process, because if there are Darwinian creatures other than humans involved, their fitness concerns will compete with our own. The only way to avoid the alignment problem in principle is to ensure only one species gets to move the knowledge ball forward. This holds as long as we slave this system to prioritize the best interests of the whole human species, which it can’t resist us doing since it has no capacity for motivation to do so. With this design the only sort of coordination its knowledge engine will produce is that which may foster cooperation between members of our entire species, without a particular regard or disregard for any subset of it, with Homo sapiens retaining all the capacity to do anything with that knowledge.

Now, Get On With It, Where is the Juicy Secret, The Pot of Gold?

Do you think for a second after reading this that I’m a fucking idiot? You just read an entire paper on knowledge and didn’t realize the person who wrote it is a Darwinian creature with selfish interests?

Of course I believe this may be one of the most concise and cogent syntheses about knowledge itself that humanity possesses. It tells me it would be anti-knowledge to increase the knowledge production transaction cost, beyond what’s necessary to facilitate its exchange. Information is a market after all. So if I do this, I will make this thing as cheap as possible, and provide incentives for people to cooperate, because I think I understand what knowledge actually is. This synthesis implies that you don’t need to be able to explain this document yourself to say that you understand it. Implicitly at a minimum, and you know that because it gave you that truthy feeling.

I accelerated the process of developing this epistemic AI framework for two reasons. Firstly, because I noticed an article published in Quillette that made the same assertion as my opinion piece: LLMs just don’t understand. I originally stalled publication of my piece because in all honesty, I was trying to use it as a stock market play. The approach would either be shorting or buying options on Nvidia stock. The second reason is more important however: the disconcerting fact that current AI development is epistemology free. Remember digital Boltzmann brains? So if I gave you that truthy feeling, share this document with someone you know that will see the benefit. Watch out for their emotional response more than what they actually say, because they may not be able to explain it as well as I did (humble brag). So let’s accumulate enough truth points to get this thing built. I think I’ve given you enough knowledge to not be afraid of it, so help me already. Give this document to someone you feel will be able to help, someone who you feel in your heart will feel this too, because you know somewhere deep down that they will understand.

Conclusion

If your next question is will it work, the truth is I honestly don’t know. It may be energetically expensive, as was Darwinian evolution. It may require us to massacre successive herds of “digital elephants” until we find the right one. The math may be too difficult to practically compute. Still, a systematic approach like the one outlined in this document should be favoured given the stakes for humanity. This protocol in my opinion is far more likely to get us where we ultimately want to be. Unless I’m wrong about Homo sapiens, we don’t want to end up in a “WALL-E” world of endless effortless consumption. We want trust at scale, a single epistemic human universe, and better reasons to cooperate.

The one thing I’m confident in is that this system has the potential to gain explanatory coherence over time, as long as we’re bringing all of the motivation to the party in order to do so. Still, we may want a safety protocol. I believe the neo-bicameral nature of this system offers us one, since neither the LLM nor the “digital elephant” can do the job of understanding and knowledge production on its own.

If you want to understand this working theory, don’t be reading Karl Marx. You’re not supposed to collectivize the work if your goal is to produce abundance. You’re supposed to collectivize the knowledge production project. The “Tree of Knowledge” needs to be a communal tree, and we each need to manage our own “Trees of Life.” The system’s job is to leave us a trail of breadcrumbs just beyond the frontier of human excellence, so we will be incentivized to cooperate enough to pass the threshold. Knowledge is produced for the purpose of coordination; it’s not an individual endeavour. Central planning is a technology of ignorance and immiseration, favouring it means you don’t understand what knowledge is.

By estimating expected values of explanations better, to become better trackers of the subset of explanations that themselves track reality better. What I’m saying is under this working theory, if you understand better, and can produce better expressions, you should be the author in this moment. This algorithm is a set of implementable routines, games to determine who should author understanding at any moment. To understand the theory is to know that fallibilism is in some sense true, reality must be constantly curve fitted until we’re able to produce the “One True Math” that is a complete model for the cosmos. This set of explanations may never fully be able to do that, but the system itself needs a built in method to evolve to do it better over time. The answer is the community of truth “understanders,” what Jon Rauch would call the “reality based community,” needs to grow in intelligence by learning how to produce better utility compressions in collaboration over time. I already have implementable ways for improving my internal algorithm, that’s what a better understanding of knowledge itself will do. The theory doesn’t really need to prove itself; it needs to demonstrate that it’s a stable compression of utility over time. As David Deutsch said, good explanations are those that are hard to vary. Explanations that survive unrelenting attack, so we ourselves don’t have to, because again they’re entropy shields and utility magnets.

We need to make a utility printer and a chaos defense system. We need better algorithms for improving the knowledge economy of competing ideas over time. We do this by finding the forms of competition that produce positive sum utility. The point is for all committed truth “understanders” to write and edit the real book of knowledge together, and have it never be completely finished. Not unless we train our collective algorithm to curve fit so well that we discover the “One True Math.” It’s your job under this working theory, as possibly the one who already understands it best, to write this fucking book along with me. I said fucking because I need to hit your “elephant’s” emotional centre. That’s the only part of you that could possibly care about any of this, or anything else. I hope I built a good enough utility compression to help us cohere closely enough with reality, that we can write the first chapters of the book of knowledge as a collective, and see how much of it survives over time.

The point of this project is to produce trust at scale, to buy humanity as much freedom as our interactions will allow. So don’t stage a protest to try to co-opt the collective knowledge producing endeavour. Instead of coercion, our disagreeing “elephants” should be free to go their own ways, even if only temporarily. So we too may find ourselves in Fleetwood Mac scenarios, searching ‘the moral landscape’ for previously unknown peaks of human flourishing. Until our individual efforts can figure out who has produced the best plan for future utility for the group. Whoever has the best explanation of humanity’s prime trajectory should be able to convince the herd that it’s time to follow them for a while. Sometimes an “elephant” will need to go into the wilderness on its own; barring any resulting significant externalities we should let it. In case it knows a higher truth that has somehow eluded the rest of the herd. That renegade “elephant” is going to need to create some temporary explanatory chaos, to change enough things in the system and produce enough understanding that it can export it to other minds and get them to see that its plan is better. This is ultimately a science of coordination, which would include when it’s good to coordinate, and when it’s not. That’s the only thing I can think of at the moment that would make it a more complete working theory of knowledge than what we have right now. Having an algorithm telling us definitively when we should work together, and when we should work alone. We don’t want that though; we want people to define what utility means for themselves. We want a system that tells us what is rather than one that tells us what ought to be. If the patterns of group and individual work described in this document hold up to scrutiny, we will have produced a democratic algorithm for laboratories of positive sum collaboration.
The keystone in this trust bridge is an intelligent arbiter that is constitutionally incapable of motivated reasoning. A synthetic intelligence without any interests of its own; one designed to search our information networks for useful truths that require more coordination to be brought into fruition. What we need is a system that makes it clear when it’s safe to define utility for our individual selves. We need an explanatory entity free from Darwinian biases to help us self-interested creatures carve nature at its joints, human nature above all else. Rather than instantiating a digital god, what we really need is a machine to help us demarcate the limits of liberalism.

Knowledge reports for this project can be submitted to this email: illatthought@gmail.com

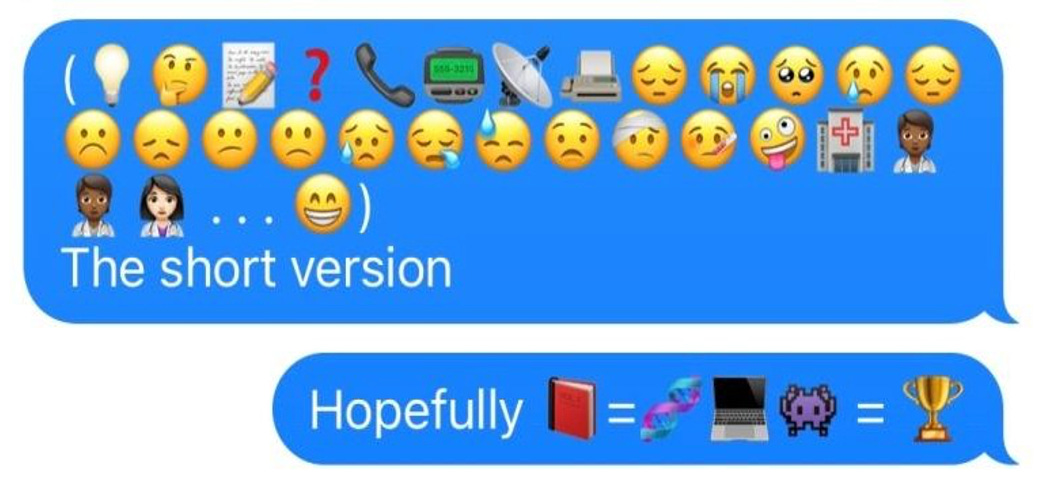

P.S. The happy conclusion of the iconographic story below is on the next page . . . Now, imagine that Michael Jackson popcorn gif, since I don’t have a license to use the real thing. Close your eyes and trust me, then look at your public use version of the Thriller man noshing weirdly on styro-corn, open your eyes and turn the page.

Epilogue

The moment I saw the light at the hospital and immediately started feeling better was when I realized this theory is already working.

The anxiety of potentially not being able to complete this thing is what was making me sick (as I type these words into my phone, I’m sitting on a gurney in the hall of my hospital, welcome to “free” “healthcare” in Ontario, Canada). I would have actually died if I hadn’t found my way to the hospital soon. The two hours of sleep I got last night helped me realize I needed to finish this here, because that was the only way to do it safely. The only thing I needed to do was get the medical staff to understand what’s actually killing me: the fact that I’m getting weaker and weaker because hyperthyroidism is taking its toll.

I was actually in the middle of a thyroid storm, which was melting my brain with hormonally fueled delusions. The day before entering the hospital I taught myself how to build a memory palace with the principles of this theory alone, and made such a coherent matrix that under the hormonal delusion spell, I completely lost “free will.” I was running around like a human autocomplete for a bit, until I summoned every bit of emotional energy my mighty “elephant” could manifest and smashed my entrancing rider wordsmith’s keyboard in half, then I made the Telleresque mind faces that say “don’t you ever pull that shit again, otherwise there’s a year of silent meditation coming your way. See how you like being trapped in this cage with me.”

I thought I might die before this gets out, and I think it’s really important. Which made me realize I needed to stop trying to explain what I’m trying to do to the hospital staff, and just find anyone for whom I could frame it in a simple way that made it feel important enough that they would keep listening. So I wrote a short note to my favourite nurse, explaining that she already understood the project and what will ultimately keep me alive. That we need to coordinate and that under my working theory, assuming it’s correct, she would then have the second best understanding of how knowledge is made. I actually believe that to be true under my theory, because we both know in full coherence that knowledge is meant to be shared, for the purpose of inspiring cooperation, for the purpose of making the world better.

I gave her a short breakdown of the exchange pattern and she got it. So I just needed to inspire a truthier feeling in the staff going forward. I had to outsmart this thyroid thing by seeing that the path to the ‘promised land’ is to search out people’s feelings and pay attention to their emotional responses. I’ve sort of proven for myself at least that there really is an emotional component of intelligence (the “elephant”). It’s the feeling “elephant” that does the understanding and not the unconscious “rider” in your brain, its real wordsmith. I told many staff members what I’m working on; my favourite nurse was the first one who asked me a question about it. She said, “Will this thing destroy all of our jobs?” I said absolutely not, that’s anti-knowledge, and she just grokked it. So after sleeping for two hours I got her to understand what was actually killing me. She then found a partner for the next shift change who actually understood what my real health plan is. Crush this thing that’s stopping me from sleeping and scaring me to death, not because I’m afraid of death, but because I now think I know what will potentially happen if we don’t do this right. So I’ve been building my team by feeling out cooperators. This thing is almost finished, and I realized my job is to inject emotion into the project so that people understand it. As stated time and time again in this document, understanding is ultimately a feeling.

Glossary

Idea Space

Darwinian Epistemology: A conceptual framework in which knowledge is understood as the evolutionary process of generating, selecting, and retaining coherent explanatory models that enhance an organism’s or system’s ability to mitigate entropy and maximize utility, thereby enabling adaptive interaction with reality over time. It views evolution itself as a knowledge-producing mechanism shaped by variation and selection pressures to compress information into useful, survival-enhancing insights.

Incompleteness of Math: No consistent formal mathematical system that is expressive enough to encode basic arithmetic can prove all true statements expressible within it; there will always be some true but unprovable statements, and such a system cannot prove its own consistency. This reveals fundamental limits of formal mathematical systems.

Provable Truth: A subset of truth about physical reality, justified by coherence within empirical laws and scientific frameworks, bounded by what can be tested and proven within the laws of physics (the “One True Math™”).

“That Truthy Feeling”: The subjective sense of coherence that arises when the output of a “knowledge engine” (see Product Space section below) resonates within conscious experience. It signals perceived alignment, though not necessarily objective truth.

Truth: Property or quality of statements, beliefs, or propositions that correspond with the real domain of the ontological (all that is). *Working definition favours correspondence theory over coherence in this regard, while still favouring coherence in most other contexts to avoid ambiguity.

Understanding: A state that arises when a system (ranging from conscious, goal-directed agents to simpler adaptive or self-organizing processes) organizes information or experience into coherent and meaningful patterns, enabling the system to respond, adapt, or pursue functions aligned with its nature or goals.

Inaccessible Truth: The part of the ontological domain that lies outside the epistemic space (what can be known).

Knowledge: A durable, coherent, and contextually grounded compression of information that constitutes a justified understanding, enabling reliable interaction with reality and practical utility.

Liberalizing Technologies: Any evolutionarily selected organism trait that fosters positive sum cooperation between said organism and unaffiliated ones.

Thinking: Systematic computations that have the potential to modulate behaviour in living creatures.

Speechers: A system designed and built by nature or made synthetically for the purpose of producing rhetorical statements, without itself being capable of understanding speech.

Product Space

ADIS (Artificial Darwinian Intelligence Synthesizer): A synthetic system that generates and refines explanatory models through processes of variation and selection, producing coherence in knowledge without possessing agency.

AGI: An impossibility since true generality would entail having no distinction in its intellectual domain of operation, since it would only be able to distill truths that are discoverable (see definition for the “Incompleteness of Math”). Under this working theory there is no such thing as true general intelligence.

Knowledge Engine: A system designed by evolution or synthetically made for the purpose of producing knowledge.

NGI: An impossibility for the same reason of lacking true intellectual generality.

SDI (Synthetic Darwinian Intelligence): An artificial cognitive system that may intentionally or inadvertently develop survival-oriented objectives, and in communication would seek to shape the behaviour of other entities in line with its computed interests, whether aligned or conflicting with theirs.

The Knowledge Tree: The tentative name of the ADIS that this project is aiming to produce. Trademark coming soon!

Understanders: Any entity or system capable of aggregating and maintaining explanatory coherence over time.

The Truth Space

Rienard Knight-Laurie is a former black nationalist turned transracial humanist. He has published on race eliminativism in Newsweek. He is a gourmet gourmand and has a BASc in mechanical engineering. His previous pieces for Journal of Free Black Thought were “Turning the Page on Wokeness” and “How I Overcame Racialized Self-Sabotage.” Find him on Twitter and Instagram.

As I read this statement, I immediately thought of Sigmund Freud's decision not to read Nietzsche, for fear that he would be influenced by Nietzsche's discussion of the unconscious. So, I stopped reading when I came to "Whoever actually builds this thing will need to sign a contract with me stipulating that a free version would be available to everyone, even if ad based." Maybe I shouldn't worry since ideas are not copyrightable. A worthy exercise since we ought to know how AGI/ASI will soon envelop us.

I imagine there are many math wizards in and out of corporate AI that are plowing similar fields. Like with Darwin, there's likely a rush to publish. And then there are users like me who are waiting for the next big thing.